EDITOR’S NOTE

Hey there 👋

Most of us marketers have asked an AI tool at some point to write a product description, a campaign brief, or a customer email, only to get back something that sounds plausible but is wrong.

You tweak the prompt, try again, and eventually give up and write the thing yourself.

That failure is a context problem: the AI didn’t know enough about your brand, product, or customers to get it right, and it had no way to find out on its own.

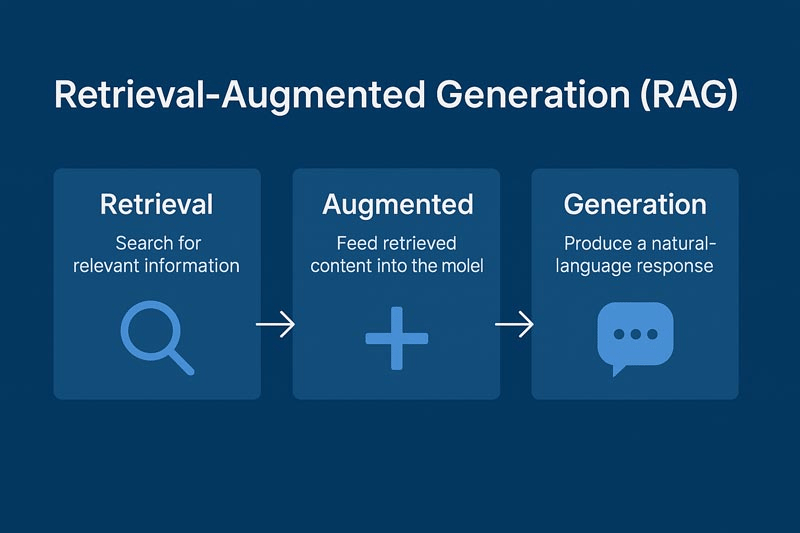

That’s the gap that Retrieval-Augmented Generation (RAG) was designed to close.

RAG is the architecture that lets AI systems pull in the right information at the right moment before generating a response. The AI first searches a database of documents, finds the most relevant chunks, and then uses those as context to craft its response.

That means the AI can provide answers grounded in up-to-date, specific data (such as your company docs, a product database, or recent articles) without needing to retrain the entire model. It reduces hallucinations and helps keep answers factual.

For marketers, understanding how RAG works is the difference between tools that scale your output and tools that create expensive cleanup work.

Let’s go! 🚀

TL;DR 📝

RAG solves the “stale knowledge” problem. Most AI models have a training cutoff date, but RAG lets them pull from live, company-specific sources before generating a response.

Context determines output quality. The same AI model produces wildly different results depending on what it retrieves before answering.

Your knowledge base is the variable that matters. Garbage in, garbage out applies here more than anywhere else in your AI stack.

NEWS YOU CAN USE 📰

What is RAG (Retrieval-Augmented Generation)? Retrieval-Augmented Generation (RAG) is the process of optimizing the output of a large language model, so it references an authoritative knowledge base outside of its training data sources before generating a response. It is a cost-effective approach to improving LLM output so it remains relevant, accurate, and useful in various contexts. [Source: AWS]

Google rolls out a native Gemini app for Mac. Google announced on Wednesday that it’s introducing a native Gemini app for Mac, catching up to rivals like OpenAI and Anthropic, which have had Mac apps for quite some time. “Now, you can bring up Gemini from anywhere on your Mac with a quick shortcut (Option + Space) to get help instantly, without ever switching tabs.” [Source: TechCrunch]

Corporate AI adoption is getting real. About 25% of S&P 500 companies now report quantifiable AI results, up from 13% last year. Marketing and product teams are showing faster production cycles and improved campaign performance. [Source: Axios]

RAG FOR MARKETERS: WHY CONTENT IS EVERYTHING 🧠

The AI models powering tools like ChatGPT and Claude are trained on massive datasets up to a certain date, and after that, they’re frozen. They don’t know about your new product launch, your updated brand guidelines, or what your competitor announced last Tuesday.

If you ask them something that requires that knowledge, they’ll either hallucinate or admit ignorance.

RAG fixes this with a two-step process. First, before the AI generates anything, it retrieves relevant information from an external source such as a database, a document library, a CRM, or a website. Second, that information is fed into the prompt as context, and the model generates a response based on both what it learned during training and what it just retrieved.

Think of it like the difference between asking a new hire to write a client proposal versus asking a senior account manager who just reviewed the client’s full history before sitting down to write.
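For the curious, here is a minimal sketch of that two-step flow in Python. It is an illustration under loud assumptions, not how any particular tool works: the three knowledge base entries are invented, retrieval is a simple word-overlap count rather than real semantic search, and the last step only builds the prompt that would be sent to a model instead of calling one.

```python
import re

# Invented mini knowledge base; in practice this would be your docs, CRM, or CMS.
KNOWLEDGE_BASE = [
    "Pricing: the Pro plan costs $49 per month as of this quarter.",
    "Brand voice: friendly, direct, no jargon.",
    "Launch: the data security feature adds audit logs and SSO.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its unique words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Step 1: rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, context: list[str]) -> str:
    """Step 2: put the retrieved text in front of the task."""
    context_block = "\n".join(f"- {item}" for item in context)
    return f"Use only this context:\n{context_block}\n\nTask: {query}"


query = "What does the data security feature add?"
context = retrieve(query, KNOWLEDGE_BASE)
prompt = build_prompt(query, context)
print(prompt)
```

The model never sees the whole knowledge base, only the few entries that scored highest for this particular question.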

The Architecture of a RAG System

Source: Conversed AI

1. The Knowledge Base

This is where your information lives and the sources the AI can pull from. For a marketing team, this might include brand guidelines, product documentation, past campaign performance data, CRM records, and blog archives.

The quality of this layer is the single biggest determinant of output quality.

An AI pulling from an outdated, poorly organized knowledge base will produce outdated, poorly organized content.

In practice:

Most teams don’t need to build a RAG system from scratch. Instead, they can rely on tools they already use: Notion, Google Drive, or Confluence as structured document hubs, HubSpot or Salesforce for CRM-driven personalization, Airtable for organizing campaign and content data, and their CMS (such as Webflow or WordPress) as the foundation for blog and SEO content.

The key here is how clean, structured, and accessible the data is.
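To make "structured" a little more concrete, here is a rough sketch of how a document might be split into retrievable chunks, each carrying a label for where it came from and when it was last reviewed. The `Chunk` fields, the `brand-guidelines` label, the date, and the 40-word limit are all illustrative assumptions. Real tools use their own chunking rules.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str   # hypothetical label, e.g. "brand-guidelines"
    updated: str  # ISO date the source was last reviewed


def chunk_document(text: str, source: str, updated: str, max_words: int = 40) -> list[Chunk]:
    """Split on blank lines, then cap each piece at max_words words."""
    chunks = []
    for paragraph in text.split("\n\n"):
        words = paragraph.split()
        for start in range(0, len(words), max_words):
            piece = " ".join(words[start:start + max_words])
            chunks.append(Chunk(piece, source, updated))
    return chunks


doc = "Our voice is friendly and direct.\n\nAvoid jargon. Prefer short sentences."
chunks = chunk_document(doc, source="brand-guidelines", updated="2025-01-15")
for chunk in chunks:
    print(chunk.source, "|", chunk.text)
```

Keeping the source and review date on every chunk is what later lets a tool filter out stale material or pull only from brand documents.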

2. The Retrieval Engine

Source: LlamaIndex

When a query comes in, the retrieval engine searches the knowledge base for the most relevant pieces of information. Modern retrieval engines use semantic search to find content that’s conceptually relevant, even if the exact words don’t match.

In practice:

This is where most of the “RAG magic” happens. Tools you can use here include:

Pinecone/Weaviate/Supabase Vector: vector databases for semantic search.

OpenAI embeddings/Cohere: embeddings to turn your content into searchable vectors.

LangChain/LlamaIndex: frameworks that connect your data to LLMs.

Glean/Algolia: internal knowledge search across tools.
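If you want to see why semantic search can match content even when the words differ, the sketch below compares vectors with cosine similarity, a common way to score how close two embeddings are. Everything in it is a stand-in: the three-number vectors are made up by hand, whereas a real embedding model would produce them for you, with hundreds of dimensions or more.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Score how closely two vectors point in the same direction (1.0 = identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made vectors standing in for real embeddings of each document.
EMBEDDED_DOCS = {
    "Refund policy for annual plans": [0.9, 0.1, 0.0],
    "Brand voice and tone guide": [0.0, 0.2, 0.9],
    "Webinar schedule": [0.1, 0.9, 0.1],
}


def semantic_search(query_vector: list[float], embedded_docs: dict, top_k: int = 1) -> list[str]:
    """Return the titles whose vectors are most similar to the query vector."""
    scored = sorted(
        embedded_docs.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [title for title, _ in scored[:top_k]]


# Pretend this is the embedding of "How do I get my money back?"
query_vector = [0.8, 0.2, 0.1]
print(semantic_search(query_vector, EMBEDDED_DOCS))
```

The question shares no words with "Refund policy for annual plans", yet that document ranks first because its vector sits closest to the query's.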

3. The Generator

Once relevant context has been retrieved, it gets passed to the language model alongside the original query. The model generates a response grounded in that retrieved information. Its job is synthesis: taking retrieved facts and crafting them into coherent, useful output.

In practice:

This is typically handled by:

ChatGPT (with custom GPTs or API)

Claude

Gemini

The differentiator is the context you feed into it.

Why This Matters for Marketers

1. Brand Consistency at Scale

One of the biggest challenges of deploying AI for content production is drift: the AI generates content that sounds like AI, not like your brand. RAG addresses this by retrieving your brand voice guidelines and approved messaging examples before generating anything new.

2. Always-current Customer Communications

Standard AI models don’t know your current pricing or the campaign you launched this week, but RAG-enabled tools do, because they retrieve that information before generating a customer-facing response. This is why HubSpot’s AI content tools, connected to your CRM and content library, produce more relevant output than a generic ChatGPT prompt.

3. Personalization at Scale

Genuine personalization requires knowing something specific about the person you’re communicating with. RAG makes this possible by retrieving individual customer data (purchase history, support interactions, browsing behavior, content preferences) and feeding it into the content generation process.

Netflix’s recommendation system isn’t a RAG system in the pure sense, but the principle is identical: retrieve what’s relevant about this specific user, then generate what’s relevant for them.

4. Search Visibility as AI Tools Evolve

Google’s AI Overviews, ChatGPT search, and Perplexity all retrieve content before generating answers. The brands cited in AI-generated search results tend to have well-structured, authoritative content that retrieval engines can find and use. RAG is increasingly how AI-powered search surfaces content to users.

RAG Workflow Example

A marketer asks their AI assistant: “Write a LinkedIn post about our new data security feature, targeted at CTOs in financial services.”

Without RAG, the AI writes something generic, but with RAG, the system first retrieves the product feature documentation, the company’s brand voice guidelines, recent LinkedIn posts that performed well with a technical audience, and regulatory language commonly used in financial services content. It then generates a post grounded in all of that context: specific, on-brand, and audience-appropriate.
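Here is one way the retrieval step in that example could be sketched, assuming each knowledge base entry is tagged with the kind of source it came from. The entries, the source labels, and the "take the first match per source" rule are all invented for illustration. A real system would rank the candidates within each source instead of taking the first one.

```python
# Invented entries, each tagged with a hypothetical source type.
KNOWLEDGE_BASE = [
    {"source": "product_docs", "text": "The data security feature encrypts data at rest and adds audit logs."},
    {"source": "brand_voice", "text": "Write plainly. Lead with the customer benefit."},
    {"source": "past_posts", "text": "Top LinkedIn post: a three-line hook followed by one concrete stat."},
    {"source": "regulatory", "text": "Avoid implying guaranteed compliance in financial services copy."},
    {"source": "past_posts", "text": "Holiday giveaway announcement."},
]

REQUIRED_SOURCES = ["product_docs", "brand_voice", "past_posts", "regulatory"]


def gather_context(entries: list[dict], required_sources: list[str]) -> dict[str, str]:
    """Pick the first entry for each required source type, in the order requested."""
    context = {}
    for source in required_sources:
        matches = [entry["text"] for entry in entries if entry["source"] == source]
        if matches:
            context[source] = matches[0]
    return context


def format_context(context: dict[str, str]) -> str:
    """Label each retrieved snippet with its source so the model can tell them apart."""
    return "\n".join(f"[{source}] {text}" for source, text in context.items())


request = (
    "Write a LinkedIn post about our new data security feature, "
    "targeted at CTOs in financial services."
)
context = gather_context(KNOWLEDGE_BASE, REQUIRED_SOURCES)
prompt = f"{format_context(context)}\n\nTask: {request}"
print(prompt)
```

Labeling each snippet by source is a design choice, not a requirement. It simply makes it easier to see which part of the knowledge base shaped the output.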

Tools that can do this today (without custom engineering):

ChatGPT (Custom GPTs with uploaded knowledge)

Claude Projects (document-based context)

HubSpot AI (CRM + content + automation)

Jasper AI (brand voice + content memory)

Copy.ai (workflow-based content generation)

Notion AI (connected workspace knowledge)

What You Should Do Now

You likely don’t need to build a RAG system from scratch.

Audit your knowledge base: The tools you’re using are only as good as the information you’ve given them access to. Are your brand guidelines in a format the AI can retrieve? Is your product documentation current and well-structured?

Look for RAG-enabled tools: When evaluating AI marketing tools, ask whether they can connect to your existing data sources. Phrases like “connected to your CRM” or “trained on your documents” usually mean the capability we’re describing here is already built in.

Treat your content library as infrastructure: The better organized your internal knowledge base, the better every AI tool that pulls from it will perform. This is the compound return most marketing teams are leaving on the table.

Keep the knowledge base current: A brand guidelines document last updated 18 months ago is actively degrading your AI output quality. Set a quarterly review cadence and treat it like a live asset.

Start simple before going technical: If you’re early, upload docs to ChatGPT, use Notion AI across your workspace, or connect HubSpot AI to your CRM. If you’re scaling, layer in vector search (Pinecone, Weaviate), use LangChain or LlamaIndex to orchestrate workflows, and build internal tools around your highest-value use cases (sales content, SEO content, customer support).
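The quarterly review suggested above is easy to automate in a rough way. The sketch below flags anything not reviewed in the last 90 days. The document names, the review dates, and the fixed "today" are hypothetical, and in practice the dates would come from wherever your files live.

```python
from datetime import date

REVIEW_WINDOW_DAYS = 90  # roughly one quarter

# Hypothetical inventory: document name -> date it was last reviewed.
inventory = {
    "brand-guidelines.pdf": date(2024, 1, 10),
    "product-docs": date(2025, 5, 20),
    "personas": date(2024, 11, 2),
}


def find_stale(docs: dict[str, date], today: date, max_age_days: int = REVIEW_WINDOW_DAYS) -> list[str]:
    """Return names of documents not reviewed within max_age_days, oldest first."""
    stale = [
        (last_reviewed, name)
        for name, last_reviewed in docs.items()
        if (today - last_reviewed).days > max_age_days
    ]
    return [name for _, name in sorted(stale)]


# A fixed date keeps the example reproducible; use date.today() in practice.
stale_docs = find_stale(inventory, today=date(2025, 7, 1))
print(stale_docs)  # ['brand-guidelines.pdf', 'personas']
```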

THIS WEEK’S PROMPT 🤖

Use this prompt with your preferred LLM to audit your current content assets for RAG-readiness and build a prioritized knowledge base improvement plan.

The Scenario:

You are the Head of Content for a B2B SaaS company that has recently deployed an AI content assistant. Despite strong adoption, outputs are frequently off-brand, outdated, or lacking product-specific depth. You suspect the issue is that your AI tool doesn’t have access to the right knowledge sources.

Current Situation:

We use [insert AI tool] for content generation, connected to our CMS but not our CRM

Our brand guidelines exist as a PDF, last updated 18 months ago

Product documentation lives in Notion, but is partially outdated

We have two years of high-performing blog posts, but they aren’t tagged or categorized

Customer personas were last updated before our most recent product pivot

Support tickets and customer feedback are housed in Intercom, but not accessible to our content tools

Questions:

Based on this situation, what are the highest-impact knowledge gaps affecting the quality of our AI content?

Which knowledge sources should we prioritize making AI-accessible first, and why?

What format and structure should each knowledge source be in to maximize retrieval accuracy?

What is a realistic 90-day roadmap for building a RAG-ready knowledge base for a content team of five people?

What processes should we put in place to keep the knowledge base current as our product and brand evolve?

How do we measure whether our RAG improvements are actually improving AI output quality?

Based on our setup ([AI tool] connected to CMS only), outline practical ways to implement RAG without engineering support.

Integration Approach

How can we connect or simulate RAG using existing tools (native features, uploads, connected docs)?

What no-code options (Zapier, Make, internal search) can bridge gaps?

If direct integration isn’t possible, what are the best workarounds?

Recommended Setup (suggest 2–3 options)

Low effort (no-code)

Mid-level (structured + scalable)

More advanced (still lightweight)

Include tools, effort level, and limitations.

Data Flow (Simple)

Explain how a query works in practice:

User prompt > retrieve data > inject context > generate output

Content Prep

For key sources (brand, product, blog, CRM):

How to structure + chunk content

What metadata/tags to add

List 5 actionable steps to improve RAG immediately.

TOOLS WE USE ⚒️

These are the most popular AI tools we use at Rise Up Media. If you're not using them already, they're worth a look.

Claude Cowork: Claude Code but for non-devs (like us!)

Manus AI: General-purpose AI agent we love (and use to create this newsletter)

n8n: Open-source automation (if you like that sort of thing)

Relevance AI: No-code create-your-own AI agents platform

OpusClip: Auto-clips long videos into shorts (and is really good at it)

Buffer: Manage all your socials (with a sprinkle of AI) in one place.

Full disclosure: some links above are affiliate links. If you sign up, we’ll earn a small commission at no extra cost to you.

WRAPPING UP 🌯

RAG is one of those concepts that sounds technical right up until the moment it clicks. Then you see it everywhere.

Every time an AI tool gives you a response that feels suspiciously well-informed about your specific situation, RAG is probably involved. When it gives you something generic and useless, the absence of RAG is likely the culprit.

For marketers, the practical implication is that your AI outputs are a direct function of the context you’ve given your AI access to. To get the most out of AI, feed your LLMs better-organized, more accessible internal knowledge.

Until next time, keep exploring the horizon. 🌅

Alex Lielacher

P.S. If you want your brand to show up in Google AI Mode, ChatGPT, and Perplexity, reach out to my agency, Rise Up Media. That's what we do.