EDITOR'S NOTE

Hey there 👋

Most marketing teams have built their AI stack opportunistically: a free trial here, a team member's recommendation there, a vendor demo at a conference that turned into a subscription nobody canceled.

Two years later, you've got 12 tools, three of them doing the same job, and a workflow that's more manual than it should be because none of them talk to each other.

The conversation in marketing circles now is almost entirely about which AI tools to add, but for most teams, the more pressing problem is what's cluttering their AI stack.

In this issue, we’ll walk you through a four-phase framework for auditing your current AI setup: mapping what you have, evaluating what's worth keeping, cutting what's redundant or orphaned, and filling the gaps that are quietly costing you performance.

Let's go! 🚀

TL;DR 📝

Most marketing AI stacks grew by accident: Tool-by-tool additions without a strategy add up to redundancy, wasted budget, and broken workflows.

A stack audit starts with use-case mapping: You need to know what jobs need doing before you can judge what's worth keeping.

Redundancy is the most common problem: Teams often have two or three tools doing the same job, none of which does it particularly well.

Gaps matter more than overlaps: The functions your stack doesn't cover at all (analytics, personalization, content ops) are where the biggest performance losses hide.

NEWS YOU CAN USE 📰

Google updates links within AI Overviews & AI Mode. Google announced five changes to how the search engine will show links and citations within its AI Search features – AI Mode and AI Overviews. These changes aim to make it “easy for you to connect with authentic voices and explore useful information across the web.” [Source: Search Engine Land]

Transform product discovery with Experience Search: AI that understands your shoppers. Shoppers don’t think in keywords. They think in intent, needs, and moments. Yet many commerce search experiences still rely on outdated keyword-based systems, leading to poor relevance, missed opportunities, and abandoned journeys. Experience Search changes that. [Source: Dynamic Yield]

Google tests Remy AI agent for Gemini as focus turns to user control. Google is testing Remy, a new AI personal agent for Gemini, according to Business Insider. The tool is designed to take action for users in their work and daily tasks. Remy is being tested in a staff-only version of the Gemini app. Remy is part of Google’s broader work to expand Gemini beyond chat-based responses. [Source: AI News]

Agentic AI for marketing: Reimagine end-to-end customer experiences. Agentic AI represents the next phase of marketing performance, enabling organizations to connect insights, decisions, and execution across the customer experience. As customer journeys become more complex and expectations rise, enterprises need systems that can operate across data, content, and workflows in a coordinated way. [Source: CIO]

200+ AI Side Hustles to Start Right Now

AI isn't just changing business; it's creating entirely new income opportunities. The Hustle's guide features 200+ ways to make money with AI, from beginner-friendly gigs to advanced ventures. Each comes with realistic income projections and resource requirements. Join 1.5M professionals getting daily insights on emerging tech and business opportunities.

THE AI MARKETING STACK AUDIT: A FRAMEWORK FOR WHAT TO KEEP, CUT & BUILD 🔍

Source: Dynamic Yield

The average B2B marketing team now pays for 12 to 15 software tools, with AI-specific subscriptions making up a growing portion of that number. The problem is that most of those tools were added reactively without any review.

The audit framework below works in four phases: map, evaluate, cut, and build.

Phase 1: Map Your Current Stack Against Use Cases

Before you evaluate any individual tool, you need to know what jobs your marketing operation requires.

Start by listing every AI tool your team currently has access to: paid, free-tier, and trial. Include the tools individuals use on their own accounts that feed into team output. Then, for each tool, answer three questions:

What is it supposed to do?

Who actually uses it?

How often?

Next, build a use-case map.

The core use cases for most marketing teams fall into eight categories: content creation, content editing and QA, SEO and search visibility, paid media optimization, email and CRM personalization, analytics and reporting, social media management, and customer research. Map each tool you have to one or more of these categories.

What you'll likely find: several categories are over-served (you have four tools touching content creation), and at least two categories have nothing assigned to them at all.
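If your inventory lives in a spreadsheet, the mapping step is mechanical enough to script. The sketch below is one way to do it, not a required tool: it assumes you've recorded each tool against the eight categories above, and it treats "more than two tools in one category" as over-served, which is our own illustrative threshold. The inventory is hypothetical.

```python
from typing import Dict, List, Tuple

# The eight core use-case categories listed above.
CATEGORIES = [
    "content creation",
    "content editing and QA",
    "SEO and search visibility",
    "paid media optimization",
    "email and CRM personalization",
    "analytics and reporting",
    "social media management",
    "customer research",
]


def build_use_case_map(tools: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Invert a tool -> categories inventory into category -> tools."""
    use_case_map: Dict[str, List[str]] = {c: [] for c in CATEGORIES}
    for tool, categories in tools.items():
        for category in categories:
            # A misspelled category raises KeyError instead of silently creating a new one.
            use_case_map[category].append(tool)
    return use_case_map


def summarize(use_case_map: Dict[str, List[str]],
              crowded_after: int = 2) -> Tuple[List[str], List[str]]:
    """Return (over-served categories, categories with no tool at all)."""
    over_served = [c for c, t in use_case_map.items() if len(t) > crowded_after]
    gaps = [c for c, t in use_case_map.items() if not t]
    return over_served, gaps


# Hypothetical inventory for illustration only.
inventory = {
    "ChatGPT": ["content creation", "customer research"],
    "Claude": ["content creation"],
    "Jasper": ["content creation"],
    "Grammarly": ["content editing and QA"],
    "Semrush": ["SEO and search visibility"],
    "Buffer": ["social media management"],
}
over_served, gaps = summarize(build_use_case_map(inventory))
print("Over-served:", over_served)
print("Gaps:", gaps)
```

With this made-up inventory, content creation comes out over-served, while paid media, email personalization, and analytics show up as empty categories: the same pattern described above.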

Phase 2: Evaluate What You Actually Have

Once the map exists, run each tool through a simple three-question evaluation:

Does it do its job better than the alternative? This includes the alternative of doing the task manually or using a different tool you already own. If the answer is no or unclear, it's a candidate for removal.

Does it integrate with the tools around it? A content tool that doesn't connect to your CMS, your CRM, or your analytics platform means someone is manually moving outputs. Manual handoffs are where time and accuracy go to die. If a tool sits in isolation, the burden of proof for keeping it is high.

Does more than one person use it? Tools that only one team member uses often reflect personal preference rather than team need. That's not always a problem, but it should be a conscious decision, not a default.

Grammarly is a good example of a tool that survives this evaluation for most teams. It integrates directly into writing environments, it's used across the team, and it adds genuine value to output quality without requiring a separate workflow.

A standalone AI image generator that one designer logs into twice a month is the opposite: limited use, no integration, and features duplicated by tools the team already pays for.
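To make the three questions consistent across tools, you can record the answers per tool and let a short script list the concerns. This is a minimal sketch under our own assumptions: "integrations" is a count of tools it connects to directly, "unclear" on the first question is recorded as `None`, and the ratings for the two examples above are invented.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ToolReview:
    name: str
    beats_alternative: Optional[bool]  # None means "unclear"
    integrations: int                  # how many of your tools it connects to directly
    active_users: int                  # people who use it in a normal month


def evaluate(review: ToolReview) -> List[str]:
    """Return the concerns the three Phase 2 questions raise for one tool."""
    concerns = []
    # Q1: "no" and "unclear" (None) both make it a removal candidate.
    if not review.beats_alternative:
        concerns.append("not clearly better than the alternative")
    # Q2: a tool with no connections raises the burden of proof for keeping it.
    if review.integrations == 0:
        concerns.append("sits in isolation")
    # Q3: single-user tools aren't automatically cut, but keeping them should be deliberate.
    if review.active_users <= 1:
        concerns.append("single user: make keeping it a conscious decision")
    return concerns


# Hypothetical ratings for the two examples above.
print(evaluate(ToolReview("Grammarly", True, 3, 8)))  # → []
print(evaluate(ToolReview("Standalone image generator", False, 0, 1)))
```

The script only surfaces concerns; the keep-or-cut call still belongs to whoever runs the audit.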

Phase 3: Cut What's Redundant or Orphaned

"Redundant" means two or more tools doing the same job with no meaningful differentiation. "Orphaned" means a tool that nobody can point to a specific, recurring output for.

Redundancy is where the easy budget wins are. If your team has ChatGPT, Claude, and Jasper all in play for content drafting, that's almost certainly not a deliberate choice.

Pick the one that fits your workflow best and cancel the others.

Orphaned tools are trickier because they often have a vocal advocate. The person who championed the tool will argue for keeping it, but the real question is whether the team's current operation actually needs it. If removing it would force someone to change how they currently work, keep it; if nobody would notice it was gone, cancel it.
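Both definitions in this phase can be written as simple checks. The sketch below is a rough first pass, not a verdict: it assumes you already have a category-to-tools map from Phase 1 and a list of each tool's recurring outputs. Category overlap only flags *possible* redundancy; whether the overlapping tools have "no meaningful differentiation" is still a human judgment.

```python
from typing import Dict, List


def redundant_groups(use_case_map: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """First-pass redundancy signal: categories served by two or more tools."""
    return {cat: tools for cat, tools in use_case_map.items() if len(tools) >= 2}


def is_orphaned(recurring_outputs: List[str]) -> bool:
    """Orphaned: nobody can point to a specific, recurring output."""
    return len(recurring_outputs) == 0


def orphan_decision(removal_changes_someones_workflow: bool) -> str:
    """Keep an orphan only if removing it would force someone to work differently."""
    return "keep" if removal_changes_someones_workflow else "cancel"


use_case_map = {
    "content creation": ["ChatGPT", "Claude", "Jasper"],
    "SEO and search visibility": ["Semrush"],
}
print(redundant_groups(use_case_map))
# → {'content creation': ['ChatGPT', 'Claude', 'Jasper']}
```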

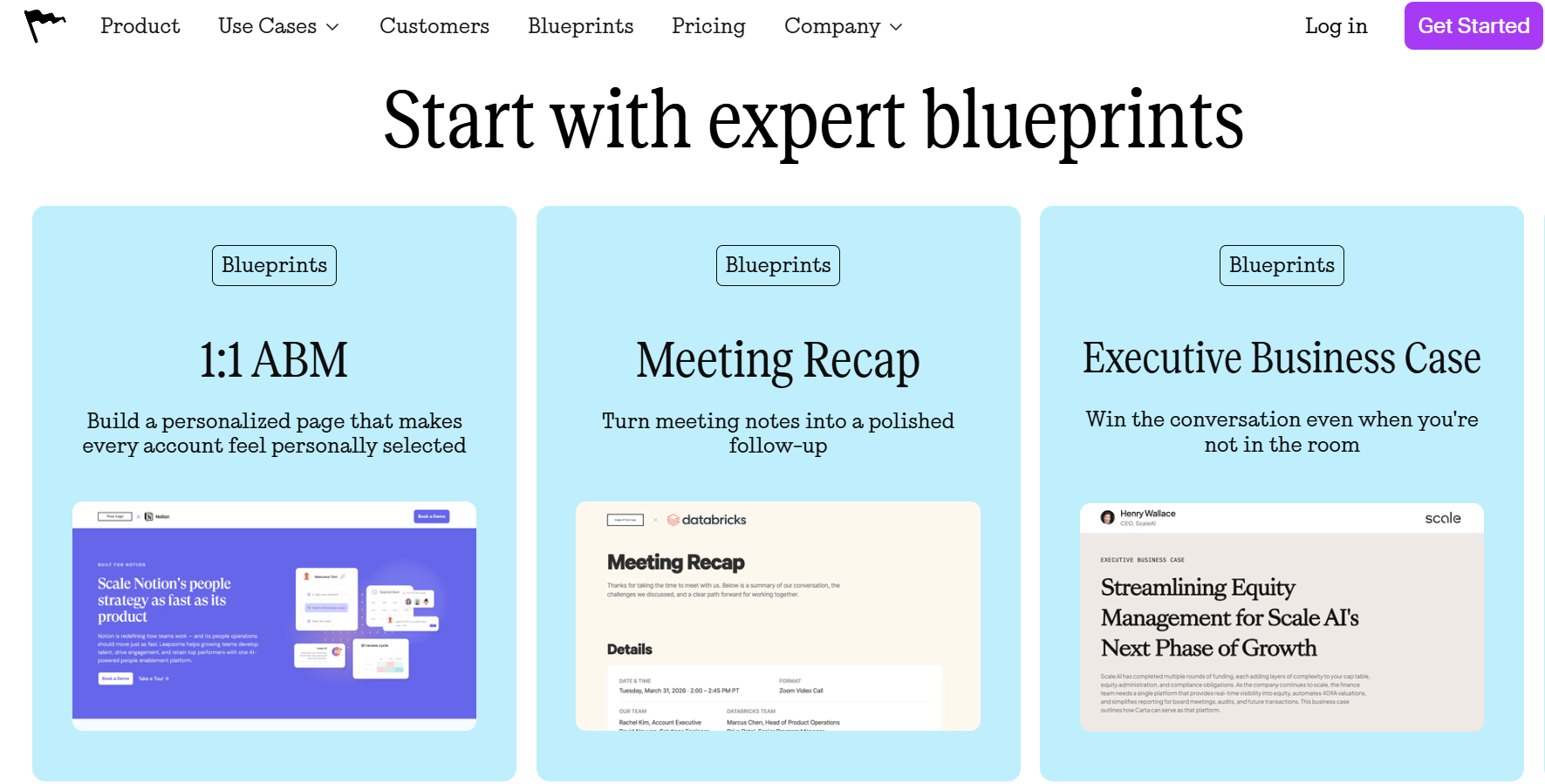

Phase 4: Spot the Gaps and Fill Them Intentionally

Source: Mutiny

After the cut, review the use-case map again and identify which categories have no tool assigned. The most common gaps are:

AI-driven analytics and attribution. Most teams can generate content with AI but can't yet use it to interpret performance data automatically. Tools like Tableau AI, Polymer, or Claude's data analysis capabilities can fill this gap, but only if someone owns performance analysis as a defined responsibility rather than a nice-to-have.

Personalization at scale. Generic email sequences and one-size-fits-all landing pages are a performance problem. Tools like Mutiny (for website personalization) or Dynamic Yield can give mid-market teams the kind of personalization enterprise teams have run for years. If your stack doesn't have a personalization layer, that gap is likely showing up in your conversion rates.

Content operations and workflow. There's often no tool managing how content moves from brief to draft to approval to publish. Platforms like Notion AI or Contentful with AI extensions help. Without something here, the stack produces outputs that pile up rather than ship.

When you fill a gap, the rule is: add one tool, confirm it integrates with at least two things you already use, and set a 90-day review date before treating it as permanent.
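The gap-filling rule has two concrete checks: at least two integrations with tools you already use, and a review date 90 days out. Here's a minimal sketch of both, assuming you list integrations by the names you use in your own inventory; the role names in the example ("CRM", "CMS") are placeholders, not claims about any specific product.

```python
from datetime import date, timedelta
from typing import Iterable, Optional

REVIEW_WINDOW = timedelta(days=90)  # the 90-day review period from the rule above
MIN_INTEGRATIONS = 2                # must connect to at least two existing tools


def approve_new_tool(integrates_with: Iterable[str],
                     current_stack: Iterable[str],
                     start: date) -> Optional[date]:
    """Return the review date if the tool passes the gap-filling rule, else None."""
    connected = set(integrates_with) & set(current_stack)
    if len(connected) < MIN_INTEGRATIONS:
        return None  # fails the integration check; don't add it yet
    return start + REVIEW_WINDOW


stack = ["CRM", "CMS", "email platform"]
print(approve_new_tool(["CRM", "CMS"], stack, date(2025, 1, 1)))  # → 2025-04-01
print(approve_new_tool(["CRM"], stack, date(2025, 1, 1)))         # → None
```

Putting the review date in the team calendar the day the tool is approved is what keeps "trial" from quietly becoming "permanent."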

What a Lean, Integrated Stack Looks Like

Source: ActiveCampaign

The goal is a stack where every tool has a clear job, connects to what's around it, and is used by more than one person.

A marketing team doing this well typically runs something like this: one primary LLM for drafting and research (Claude or ChatGPT), one SEO platform (Ahrefs or Semrush), one content workflow tool (Notion AI or monday.com), one email platform with built-in AI (Klaviyo, ActiveCampaign, or HubSpot), and one analytics layer. Five tools, each doing a distinct job and talking to one another.
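The three properties of a lean stack (a clear job, connected to what's around it, used by more than one person) can double as a quick health check. The sketch below assumes you track each tool's job, user count, and direct connections; the five entries use role names rather than products, and their connections and user counts are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class StackTool:
    name: str
    job: str
    users: int
    connects_to: Set[str] = field(default_factory=set)


def lean_stack_issues(stack: List[StackTool]) -> List[str]:
    """Flag tools that break the three lean-stack properties."""
    names = {t.name for t in stack}
    jobs = [t.job for t in stack]
    issues = []
    for t in stack:
        if jobs.count(t.job) > 1:
            issues.append(f"{t.name}: shares its job ({t.job}) with another tool")
        # Only connections to tools that are actually in this stack count.
        if not (t.connects_to & names):
            issues.append(f"{t.name}: not connected to any other tool in the stack")
        if t.users < 2:
            issues.append(f"{t.name}: used by only one person")
    return issues


# Hypothetical five-tool stack using role names; connections and user counts are made up.
stack = [
    StackTool("LLM", "drafting and research", 6, {"workflow tool", "SEO platform"}),
    StackTool("SEO platform", "search visibility", 3, {"LLM", "analytics layer"}),
    StackTool("workflow tool", "content operations", 6, {"LLM", "email platform"}),
    StackTool("email platform", "email and CRM", 4, {"analytics layer"}),
    StackTool("analytics layer", "reporting", 3, {"email platform", "SEO platform"}),
]
print(lean_stack_issues(stack))  # → []
```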

THIS WEEK'S PROMPT 🧠

Use this prompt with your preferred LLM to run a structured AI stack audit for your marketing team.

The Scenario

You are the Head of Marketing for a […] company with a team of […] people. Your team has accumulated AI tools over the past two years across content, SEO, email, and reporting. You want to audit what you have, identify redundancies, and build a more intentional setup.

The Prompt

"I need to run an AI marketing stack audit. I'll share my current tools and how we use them. For each one, help me evaluate and decide whether to keep, cut, or replace it. Then identify which core marketing use cases we have no tool coverage for. Finally, recommend a lean, integrated stack based on what we should keep and what we're missing.

Current Situation:

We have 11 AI tools in total across the team (insert tools here).

We spend roughly $2,400/month on AI subscriptions.

Our core functions are content, SEO, email marketing, and paid social.

Most tools were added individually rather than as part of a planned strategy.

We have no documented process for how tools connect to each other.

Questions:

What information do you need from me to run a proper audit? What should I inventory for each tool?

What are the eight core use cases a marketing AI stack should cover, and which ones are most commonly neglected?

How do I identify redundancy? What signals tell me two tools are doing the same job?

What criteria should I use to decide which tool to keep when two overlap?

How do I evaluate integration quality? What does 'well-integrated' actually look like in practice?

What does a lean, well-connected stack of five to seven tools look like for a B2B SaaS marketing team of our size?

How do I build a 90-day review cycle to keep the stack from growing sideways again?"

TOOLS WE USE ⚒️

These are the most popular AI tools we use at Rise Up Media. If you're not using them already, they're worth a look.

Claude Cowork: Claude Code but for non-devs (like us!)

Manus AI: General-purpose AI agent we love (and use to create this newsletter)

n8n: Open-source automation (if you like that sort of thing)

Relevance AI: No-code create-your-own AI agents platform

OpusClip: Auto-clips long videos into shorts (and is really good at it)

Buffer: Manage all your socials (with a sprinkle of AI) in one place

Full disclosure: some links above are affiliate links. If you sign up, we'll earn a small commission at no extra cost to you.

WRAPPING UP 🌯

The more useful question isn't which tool to add next but what you already have and whether it's working. Map your stack against actual use cases, cut what doesn't pull its weight, and build integrations between what remains.

An audit isn't a one-time fix. The stack will grow again: new tools will appear, new team members will bring their own preferences, and new use cases will emerge. The discipline lies in building a review habit.

Until next time, keep exploring the horizon. 🌅

Alex Lielacher

P.S. If you want your brand to show up in Google AI Mode, ChatGPT, and Perplexity, reach out to my agency, Rise Up Media. That's what we do.